I run a consultancy. That means my Monday morning is a pile of pipeline reviews, client work, training programmes, half-finished proposals, and a calendar that keeps re-arranging itself. I used to do all of it with a stack of cloud-hosted AI tools: ChatGPT in one tab, Claude in another, a couple of automation tools, and an agent platform that promised everything and delivered most of it.

And it worked, mostly. Until it didn't.

This is a founder's note about why I stopped trusting that stack and built my own. Not because the cloud tools are bad (they're remarkable, and I still use them every day), but because at some point you start running your operating system through someone else's product, and the cracks start to show in places that matter.

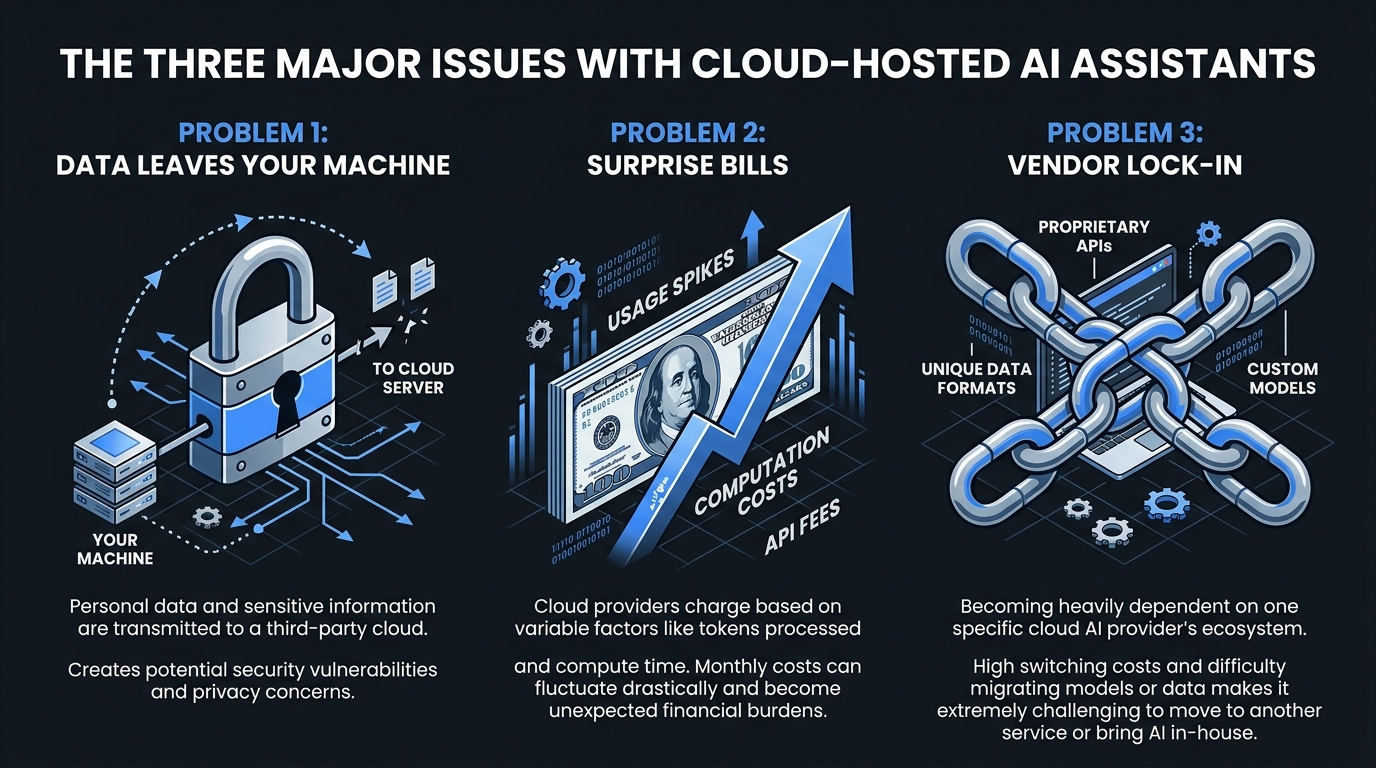

The three things that broke my trust

1. My data was leaving the room

Every prompt I sent was a small piece of context about my clients, my pipeline, my deals, and my decisions. Every response was a window into how I was thinking about each problem. None of it was inherently sensitive on its own, but stitched together over months, it added up to a remarkably complete picture of how my consultancy operates.

The vendors all swear they don't train on your data. I believe most of them, most of the time. But "I believe most of them most of the time" is not the standard I want my client confidentiality bound to. I want to be able to look at where my context lives.

2. The bills were getting weird

I'd start a month thinking I was on a £20 plan and end it owing £180 because I'd hit an API tier I didn't notice, or because a workflow I built kicked off a long-running task while I was on a call, or because some "free" feature decided it now had per-token pricing. Three different vendors, three different billing models, and at the end of every month I was effectively reverse-engineering what I'd spent.

For a consultant whose own value prop is "I'll make your AI spend predictable", that's embarrassing.

3. The lock-in was quiet, then loud

The first agent platform I committed to changed its pricing model six months in. The second deprecated the integration I depended on. The third was bought, and the new owners had different ideas about what the product should be. In each case I lost weeks rebuilding workflows on the next thing.

I was building my operating system on rented land. I wanted to own the codebase.

What I built instead

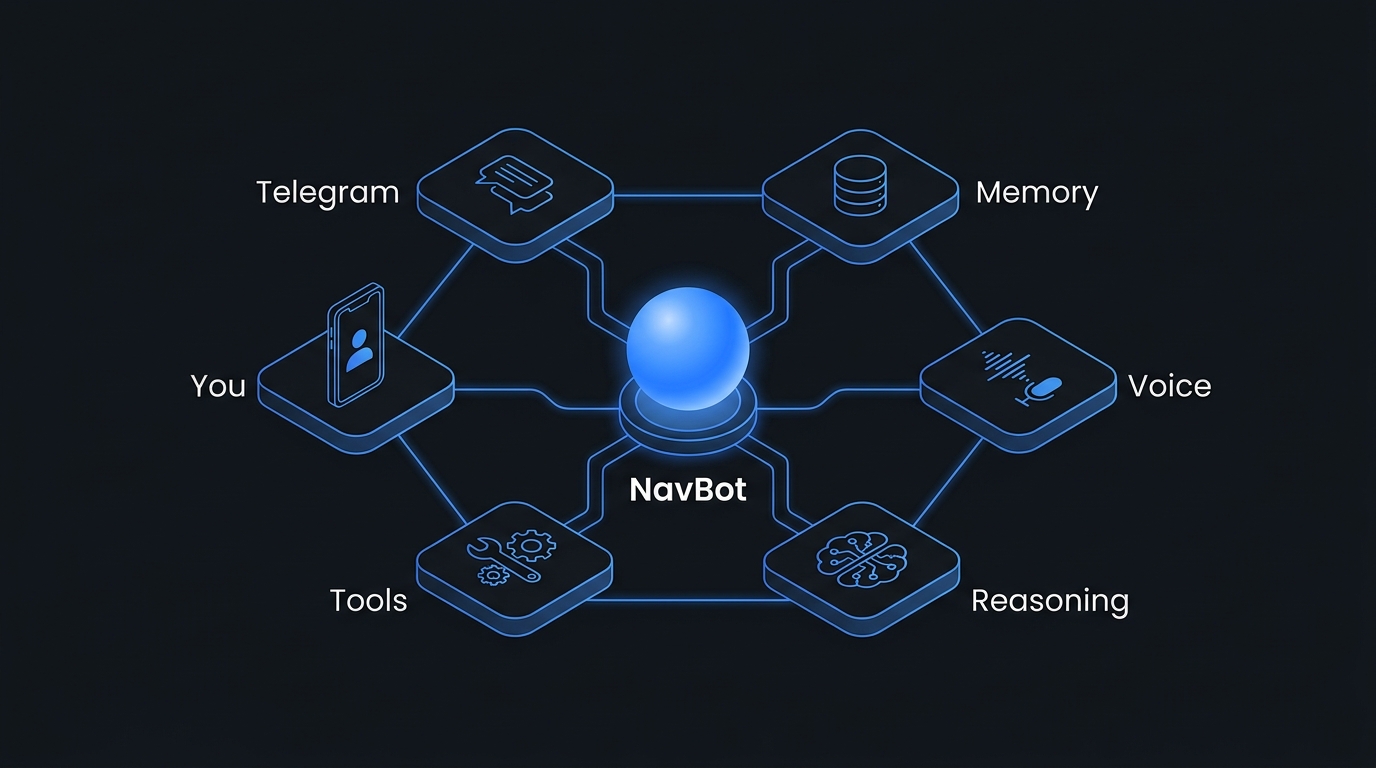

NavBot is what came out of about eighteen months of building, breaking, and re-building my own AI second brain. The shape of it is deliberately simple:

- One bot I message constantly. Telegram, because it's where I already live. It's always on. It knows me.

- One memory store I own. Postgres in a database I run, with embeddings for semantic recall. My context lives in a database I can open.

- One reasoning layer I can swap. Mostly Claude, occasionally a local model when I want zero round-trips. The bot picks the right one for the task.

- A handful of tools it knows how to use. Calendar, email, the pipeline, voice calls, web search. Each one a small, replaceable module.

- It runs on my own infrastructure. A modest VPS with a few moving parts. Predictable cost, predictable uptime, predictable behaviour.

That's it. There's no novel architecture here. Most of NavBot's code is the unsexy work of wiring well-understood pieces together cleanly. The interesting decisions are the ones between the boxes: what the bot remembers, what it forgets, when it asks for confirmation, how it escalates.
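To make "embeddings for semantic recall" concrete, here is a minimal Python sketch of the idea. It is an illustration, not NavBot's code: the `Memory` class, the `recall` function, and the toy two-dimensional vectors are all hypothetical, and an in-memory list stands in for the Postgres store. Real embeddings would come from an embedding model and have hundreds of dimensions.

```python
import math
from dataclasses import dataclass


@dataclass
class Memory:
    """One remembered item: the text plus its embedding vector (hypothetical shape)."""
    text: str
    embedding: list[float]


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors; 0.0 if either has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def recall(query_embedding: list[float], memories: list[Memory], top_k: int = 3) -> list[Memory]:
    """Return the top_k memories whose embeddings are closest to the query."""
    ranked = sorted(
        memories,
        key=lambda m: cosine_similarity(query_embedding, m.embedding),
        reverse=True,
    )
    return ranked[:top_k]


# Toy data: made-up vectors, chosen only to show the ranking behaviour.
store = [
    Memory("Pipeline review notes", [1.0, 0.0]),
    Memory("Holiday booking", [0.0, 1.0]),
    Memory("Deal follow-up email", [0.9, 0.1]),
]
closest = recall([1.0, 0.0], store, top_k=2)
```

In a real deployment the ranking would normally happen inside the database rather than in application code, but the principle is the same: the query is turned into a vector, and the nearest stored vectors are what the bot "remembers".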

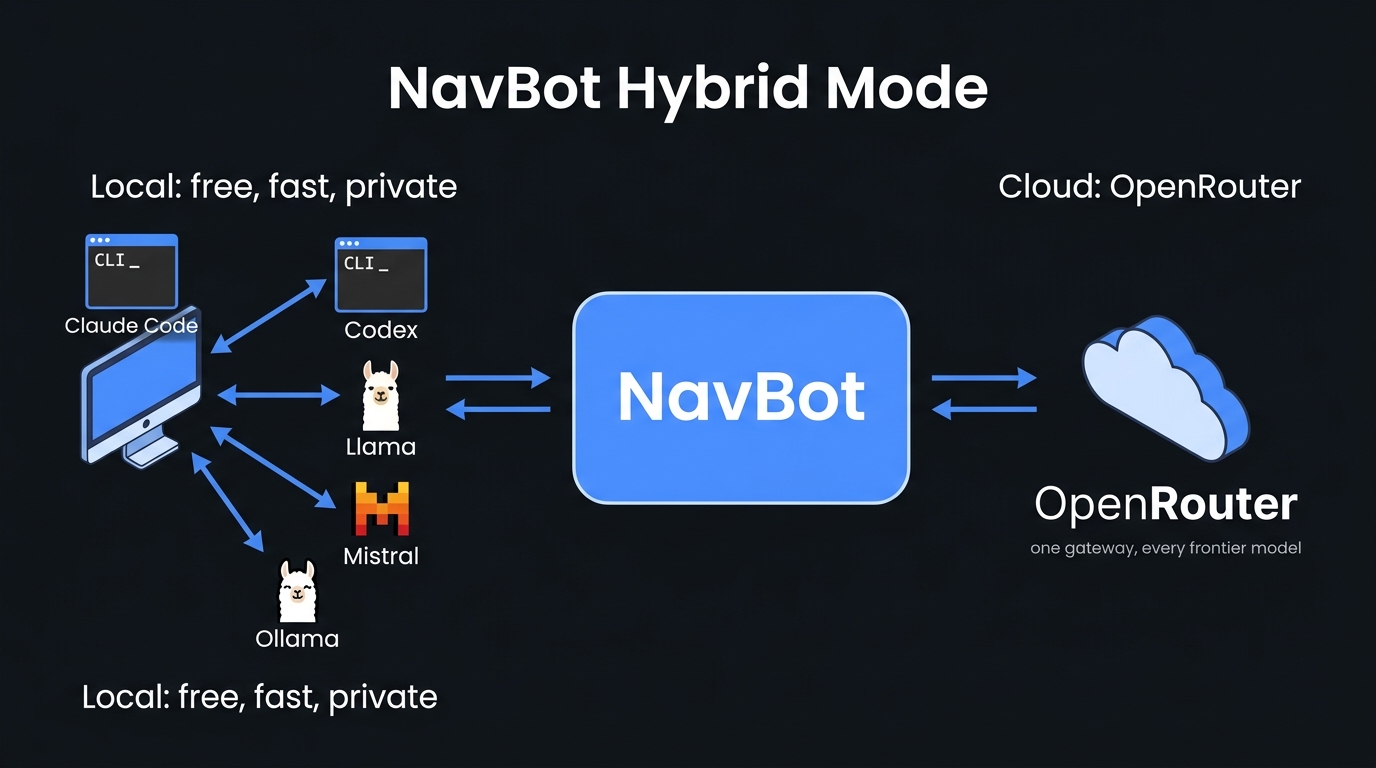

The hybrid model: local first, cloud when it matters

One thing I didn't expect when I started: how good the open-source models had got, and how much that mattered. Most of the things I ask my second brain to do (pull a meeting summary, draft a note, classify an email, restructure a list) don't need a frontier model. They need a competent one that runs locally for free, in milliseconds, without leaving the box.

NavBot routes intelligently: local models handle anything that doesn't need raw horsepower; the frontier models step in for the genuinely hard reasoning work. The result is that my monthly cloud spend is a fraction of what it used to be, my latency is lower, and my privacy posture is meaningfully better, without giving up any of the heavy-lift capability when I actually need it.
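A rough way to picture that routing rule is the Python sketch below. It is not NavBot's actual router. The `Task` class, the `choose_model` function, and the task labels in `FRONTIER_KINDS` are hypothetical names invented for illustration. The only thing taken from the description above is the rule itself: local by default, frontier for the genuinely hard reasoning work.

```python
from dataclasses import dataclass

# Hypothetical task kinds that always go to a frontier model.
# These labels are assumptions for the example, not NavBot's real categories.
FRONTIER_KINDS = {"multi_step_analysis", "long_document_reasoning"}


@dataclass(frozen=True)
class Task:
    kind: str
    needs_deep_reasoning: bool = False  # explicit override set by the caller


def choose_model(task: Task) -> str:
    """Return 'frontier' for hard reasoning work and 'local' for everything else."""
    if task.needs_deep_reasoning or task.kind in FRONTIER_KINDS:
        return "frontier"
    # Local first: anything not flagged as hard stays on the box.
    return "local"


routes = {
    "classify_email": choose_model(Task("classify_email")),
    "draft_note": choose_model(Task("draft_note")),
    "multi_step_analysis": choose_model(Task("multi_step_analysis")),
    "flagged_note": choose_model(Task("draft_note", needs_deep_reasoning=True)),
}
```

The design choice worth noticing is the default. Because unflagged work stays local, a new kind of task costs nothing and sends nothing off the box until someone decides it deserves the frontier model.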

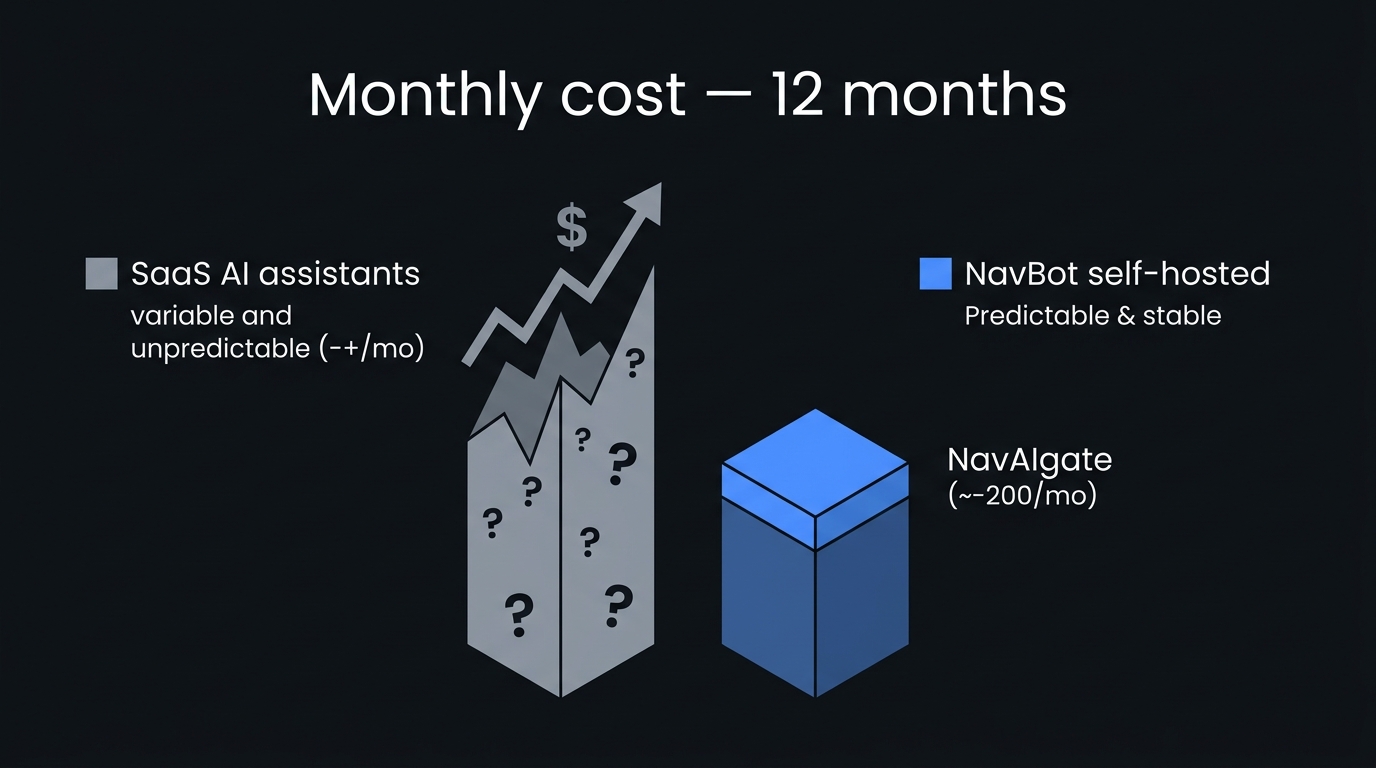

What it costs me to run

The single biggest unlock from owning your own stack is cost predictability. Not low cost; predictable cost. I now know almost exactly what NavBot will cost me each month. The variable component (frontier model API calls) is bounded by what I configured it to do, not by surprise pricing changes from a vendor I don't control.

For a consultant trying to model their own P&L, that turned out to be more important than the absolute number.
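One way to bound the variable component is a hard monthly cap that is checked before every frontier call. The Python sketch below assumes that approach. The `MonthlyBudget` class and the figures in the usage lines are hypothetical placeholders, not NavBot's real configuration or real costs.

```python
from dataclasses import dataclass


@dataclass
class MonthlyBudget:
    fixed_gbp: float            # fixed costs such as hosting (placeholder figure)
    api_cap_gbp: float          # configured ceiling on frontier API spend
    api_spent_gbp: float = 0.0  # running total for the current month

    def can_spend(self, estimated_gbp: float) -> bool:
        """True if a call with this estimated cost still fits under the cap."""
        return self.api_spent_gbp + estimated_gbp <= self.api_cap_gbp

    def record(self, actual_gbp: float) -> None:
        """Add the actual cost of a completed call to the running total."""
        self.api_spent_gbp += actual_gbp

    def worst_case_total(self) -> float:
        """Upper bound on the month's bill if the cap is fully used."""
        return self.fixed_gbp + self.api_cap_gbp


# Placeholder numbers, chosen only to make the arithmetic easy to follow.
budget = MonthlyBudget(fixed_gbp=10.0, api_cap_gbp=30.0)
budget.record(28.0)
allowed_small = budget.can_spend(2.0)   # 28 + 2 fits under the 30 cap
allowed_large = budget.can_spend(5.0)   # 28 + 5 would exceed the cap
ceiling = budget.worst_case_total()
```

When `can_spend` returns false, the caller can fall back to a local model or ask for confirmation. The cap is only as tight as the cost estimates fed into it, so a real version would want a safety margin.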

Who this is for (and who it isn't)

NavBot is not a mass-market app. I'm not trying to ship 10,000 of them. It's the operating system of one consultant's brain, packaged for the small group of operators who'd rather wield a sharp tool than a soft one.

It's for you if:

- You're a sales leader, founder, consultant, or senior operator running real numbers

- You'd rather own a codebase than rent a SaaS subscription

- You have ~30 minutes a day to build the muscle

- You believe AI should disappear into your week, not become another tab to manage

It's not for you if:

- You want a no-code "set and forget" tool

- You're looking for the cheapest possible option

- You don't want to learn anything technical

- You want someone else to run AI for you (we have a different offer for that; see L9)

The three ways in

NavBot lives inside the NavAIgate community. There are three doors:

- L7: Annual member (self-serve). Pay annually, get the full source code, the setup docs, and the community to lean on while you deploy it. The fastest way in. Most operators come this route.

- L8: White-glove. Same NavBot, but I sit down with you 1-on-1 and deploy it on your stack. Earned through Inner Circle activity or purchased as an upgrade.

- L9: AIOS Completionist. Come in person to the AIOS Training Programme, a one-day onsite workshop in the EU, the US, or the Middle East, in small groups of 6 to 8 operators. Walk out with NavBot already running, plus ongoing premium access. Top of the ladder.

NavBot is currently in private build. Joining the community now puts you in the founders' cohort: early access at launch, locked-in pricing forever, and a direct say in what ships first.

One last thing

Everything I've written here came out of running my own consultancy. None of it is theory. NavBot is the same system I run my pipeline through, write my training programmes with, and have running in the background of every meeting I take.

If that sounds like what you're looking for, the door is open.

Daniel

The door

Join the NavAIgate community.

The community lives on Skool. NavBot lives at L7+. The path is published.